PyG 2.5.0: Distributed training, graph tensor representation, RecSys support, native compilation

We are excited to announce the release of PyG 2.5 🎉🎉🎉

PyG 2.5 is the culmination of work from 38 contributors who have worked on features and bug-fixes for a total of over 360 commits since torch-geometric==2.4.0.

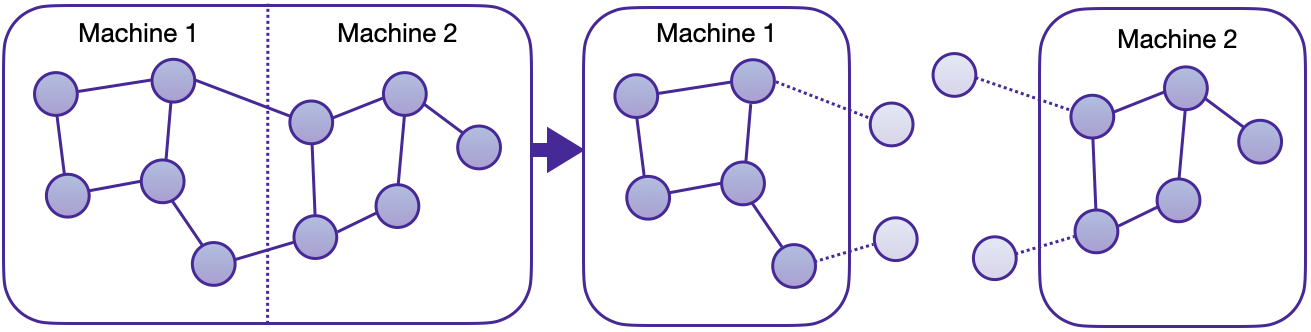

torch_geometric.distributed

We are thrilled to announce the first in-house distributed training solution for PyG via the torch_geometric.distributed sub-package. Developers and researchers can now take full advantage of distributed training on large-scale datasets that cannot be fully loaded into the memory of a single machine. This implementation doesn't require any additional packages to be installed on top of the default PyG stack.

The GraphStore and FeatureStore APIs provide a flexible and tailored interface for distributing large graph structure information and feature storage. See here for the accompanying tutorial. In addition, we provide two distributed examples in examples/distributed/pyg to get started.
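The idea of keeping node features partitioned across machines and routing lookups by node ID can be sketched in plain Python. This is a conceptual illustration only and not the torch_geometric.distributed API: the `PartitionedFeatures` class, its method names and the modulo partitioning rule are all assumptions made for this example.

```python
from collections import defaultdict


class PartitionedFeatures:
    """Toy partitioned feature store (hypothetical, not PyG's API)."""

    def __init__(self, num_parts):
        self.num_parts = num_parts
        # One dict per partition; in a real setup each one would live
        # on a different machine.
        self.parts = [dict() for _ in range(num_parts)]

    def owner(self, node_id):
        # Assumed partitioning rule: round-robin by node ID.
        return node_id % self.num_parts

    def put(self, node_id, feature):
        self.parts[self.owner(node_id)][node_id] = feature

    def get(self, node_ids):
        # Group requested IDs by owning partition so that each partition
        # is visited once (this would be one remote call per partition).
        by_part = defaultdict(list)
        for node_id in node_ids:
            by_part[self.owner(node_id)].append(node_id)
        fetched = {}
        for part, ids in by_part.items():
            for node_id in ids:
                fetched[node_id] = self.parts[part][node_id]
        # Return the features in the order they were requested.
        return [fetched[node_id] for node_id in node_ids]


store = PartitionedFeatures(num_parts=2)
for node_id in range(6):
    store.put(node_id, [float(node_id)] * 2)

features = store.get([5, 0, 3])
```

No single partition holds all six feature vectors, yet the caller still addresses nodes by their global ID.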

EdgeIndex Tensor Representation

torch-geometric==2.5.0 introduces the EdgeIndex class.

EdgeIndex is a torch.Tensor that holds an edge_index representation of shape [2, num_edges]. Edges are given as pairwise source and destination node indices in sparse COO format. While EdgeIndex sub-classes a general torch.Tensor, it can hold additional (meta)data, i.e.:

- sparse_size: The underlying sparse matrix size
- sort_order: The sort order (if present), either by row or column
- is_undirected: Whether edges are bidirectional.

Additionally, EdgeIndex caches data for fast CSR or CSC conversion in case its representation is sorted (i.e. its rowptr or colptr). Caches are filled based on demand (e.g., when calling EdgeIndex.sort_by()), or when explicitly requested via EdgeIndex.fill_cache_(), and are maintained and adjusted over its lifespan (e.g., when calling EdgeIndex.flip()).

    import torch
    from torch_geometric import EdgeIndex

    edge_index = EdgeIndex(
        [[0, 1, 1, 2],
         [1, 0, 2, 1]],
        sparse_size=(3, 3),
        sort_order='row',
        is_undirected=True,
        device='cpu',
    )
    # EdgeIndex([[0, 1, 1, 2],
    #            [1, 0, 2, 1]])
    assert edge_index.is_sorted_by_row
    assert edge_index.is_undirected

    # Flipping order:
    edge_index = edge_index.flip(0)
    # EdgeIndex([[1, 0, 2, 1],
    #            [0, 1, 1, 2]])
    assert edge_index.is_sorted_by_col
    assert edge_index.is_undirected

    # Filtering:
    mask = torch.tensor([True, True, True, False])
    edge_index = edge_index[:, mask]
    # EdgeIndex([[1, 0, 2],
    #            [0, 1, 1]])
    assert edge_index.is_sorted_by_col
    assert not edge_index.is_undirected

    # Sparse-Dense Matrix Multiplication:
    out = edge_index.flip(0) @ torch.randn(3, 16)
    assert out.size() == (3, 16)
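To make the cached rowptr more tangible, the following plain-Python sketch builds a CSR row pointer from a row-sorted COO representation. It only illustrates the data structure and is not how EdgeIndex computes its cache; the helper name `coo_to_rowptr` is hypothetical.

```python
def coo_to_rowptr(row, num_rows):
    """Build the CSR row pointer for a row-sorted COO `row` vector."""
    assert all(a <= b for a, b in zip(row, row[1:])), "row must be sorted"
    # Count how many edges start at each row ...
    counts = [0] * num_rows
    for r in row:
        counts[r] += 1
    # ... and turn the counts into cumulative offsets.
    rowptr = [0]
    for c in counts:
        rowptr.append(rowptr[-1] + c)
    return rowptr


row = [0, 1, 1, 2]
col = [1, 0, 2, 1]
rowptr = coo_to_rowptr(row, num_rows=3)

# The neighbors of node i are stored in col[rowptr[i]:rowptr[i + 1]].
neighbors_of_1 = col[rowptr[1]:rowptr[2]]
```

Because the pointer depends only on the sorted row vector, it can be computed once and reused, which is why caching it pays off.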

EdgeIndex is implemented through extending torch.Tensor via the __torch_function__ interface (see here for the highly recommended tutorial).

EdgeIndex ensures optimal computation in GNN message passing schemes, while preserving the ease of use of regular COO-based PyG workflows. EdgeIndex will fully deprecate the usage of SparseTensor from torch-sparse in later releases, leaving us with just a single source of truth for representing graph structure information in PyG.
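As a rough illustration of why a sorted, CSR-style layout suits message passing, the sketch below sums neighbor features row by row, which is equivalent to a sparse-dense matrix product with a binary adjacency matrix. It is a plain-Python sketch under that assumption, not PyG's kernel, and `csr_matmul` is a hypothetical name.

```python
def csr_matmul(rowptr, col, x):
    """Compute A @ x for a binary sparse matrix A given in CSR form."""
    num_features = len(x[0])
    out = []
    for i in range(len(rowptr) - 1):
        acc = [0.0] * num_features
        # All neighbors of node i sit in one contiguous slice of `col`.
        for j in col[rowptr[i]:rowptr[i + 1]]:
            for f in range(num_features):
                acc[f] += x[j][f]
        out.append(acc)
    return out


rowptr = [0, 1, 3, 4]          # CSR pointer of the 3-node path graph 0-1-2
col = [1, 0, 2, 1]
x = [[1.0, 0.0], [0.0, 1.0], [2.0, 2.0]]
out = csr_matmul(rowptr, col, x)
```

Each output row is written exactly once and reads a contiguous range of indices, which avoids the scattered writes of an unsorted COO loop.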

Previously, all/most of our link prediction models were trained and evaluated using binary classification metrics. However, this usually requires that we have a set of candidates in advance, from which we can then infer the existence of links. This is not necessarily practical, since in most cases, we want to find the top-k most likely links from the full set of O(N^2) pairs.
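The top-k formulation can be sketched with a brute-force search in plain Python: every source embedding is scored against every destination embedding by inner product, and the k best destinations are kept. The function name `top_k_links` and the toy embeddings are assumptions made for this example.

```python
import heapq


def top_k_links(src_emb, dst_emb, k):
    """Return, per source node, the k destination indices with the
    largest inner product (brute force over all pairs)."""
    result = []
    for s in src_emb:
        scores = [sum(a * b for a, b in zip(s, d)) for d in dst_emb]
        best = heapq.nlargest(k, range(len(dst_emb)), key=scores.__getitem__)
        result.append(best)
    return result


src_emb = [[1.0, 0.0], [0.0, 1.0]]
dst_emb = [[0.9, 0.1], [0.2, 0.8], [0.5, 0.5]]
top2 = top_k_links(src_emb, dst_emb, k=2)
```

No candidate set is needed in advance, but the cost grows with the number of source-destination pairs, which is what dedicated index structures aim to reduce.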

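torch-geometric==2.5.0 brings full support for using GNNs as a recommender system (#8452), including support for:

- Maximum inner product search (MIPS) via MIPSKNNIndex
- The retrieval metrics f1@k, map@k, precision@k, recall@k and ndcg@k, including mini-batch support

For example:

    from torch_geometric.nn import MIPSKNNIndex

    # dst_emb, src_loader, model, k, retrieval_metrics and edge_label_index
    # are assumed to be defined by the surrounding training script.
    mips = MIPSKNNIndex(dst_emb)

    for src_batch in src_loader:
        src_emb = model(src_batch.x_dict, src_batch.edge_index_dict)
        # Retrieve the top-k destination candidates for each source node:
        _, pred_index_mat = mips.search(src_emb, k)

        for metric in retrieval_metrics:
            metric.update(pred_index_mat, edge_label_index)

    for metric in retrieval_metrics:
        metric.compute()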

See here for the accompanying example.
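The update/compute pattern used for mini-batch metrics can be illustrated with a small recall@k accumulator in plain Python. This is a sketch and not the torch_geometric.metrics implementation: the class `RecallAtK` is hypothetical, and it takes the ground truth as one set of destination indices per source node, which is a simplification of the edge_label_index format.

```python
class RecallAtK:
    """Accumulates recall@k over mini-batches (illustrative only)."""

    def __init__(self, k):
        self.k = k
        self.total = 0.0
        self.count = 0

    def update(self, pred_index_mat, ground_truth):
        # pred_index_mat[i]: ranked predictions for source node i
        # ground_truth[i]:   set of true destination indices for node i
        for preds, truth in zip(pred_index_mat, ground_truth):
            if not truth:
                continue  # skip source nodes without any positive link
            hits = len(set(preds[:self.k]) & truth)
            self.total += hits / len(truth)
            self.count += 1

    def compute(self):
        # Average recall over all source nodes seen so far.
        return self.total / self.count if self.count else 0.0


metric = RecallAtK(k=2)
metric.update([[0, 2], [1, 2]], [{0}, {0, 1}])  # first mini-batch
metric.update([[2, 1]], [{0}])                  # second mini-batch
recall = metric.compute()
```

Only running sums are stored between batches, so the full prediction matrix never has to be held in memory.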

PyG 2.5 is fully compatible with PyTorch 2.2 (#8857), and supports the following combinations:

| PyTorch 2.2 | cpu | cu118 | cu121 |
|--------------|-------|---------|---------|
| Linux | ✅ | ✅ | ✅ |
| macOS | ✅ | | |
| Windows | ✅ | ✅ | ✅ |

You can still install PyG 2.5 with an older PyTorch release, as far back as PyTorch 1.12, in case you are not eager to update your PyTorch version.

torch.compile(...) and TorchScript Support

torch-geometric==2.5.0 introduces a full re-implementation of the MessagePassing interface, which makes it natively applicable to both torch.compile and TorchScript. As such, torch_geometric.compile is now fully deprecated in favor of torch.compile:

    - model = torch_geometric.compile(model)
    + model = torch.compile(model)

and MessagePassing.jittable() is now a no-op:

    - conv = torch.jit.script(conv.jittable())
    + conv = torch.jit.script(conv)

In addition, torch.compile usage has been fixed to not require disabling of extension packages such as torch-scatter or torch-sparse.
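For readers curious how existing call sites can keep working through such a migration, here is a generic plain-Python sketch of the pattern: a deprecated entry point that warns and forwards to its replacement, and a method kept as a no-op. It does not reproduce PyG's code; `old_compile`, `new_compile` and `Layer` are hypothetical names.

```python
import warnings


def new_compile(model):
    """Stand-in for the replacement API (hypothetical)."""
    return model


def old_compile(model):
    """Deprecated entry point that forwards to the replacement."""
    warnings.warn(
        "old_compile is deprecated, use new_compile instead",
        DeprecationWarning,
        stacklevel=2,
    )
    return new_compile(model)


class Layer:
    def jittable(self):
        # Kept as a no-op so that existing `layer.jittable()` calls
        # continue to work and simply return the layer itself.
        return self


layer = Layer()
with warnings.catch_warnings(record=True) as caught:
    warnings.simplefilter("always")
    compiled = old_compile(layer.jittable())
```

Old code keeps running and produces a visible warning, which gives users time to switch to the new call.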

- torch_geometric.distributed (examples/distributed/pyg/) (#8713)
- examples/hetero/temporal_link_pred.py (#8383)
- examples/distributed/pyg/temporal_link_movielens_cpu.py (#8820)
- examples/multi_gpu/distributed_sampling_xpu.py (#8032)
- ogbn-papers100M (examples/multi_gpu/papers100m_gcn_multinode.py) (#8070)
- examples/multi_gpu/model_parallel.py (#8309)
- ViSNet from "ViSNet: an equivariant geometry-enhanced graph neural network with vector-scalar interactive message passing for molecules" (#8287)
- examples/multi_gpu/distributed_sampling.py (#8880)
- torch_geometric.compile in favor of torch.compile (#8780)
- torch_geometric.nn.DataParallel in favor of torch.nn.parallel.DistributedDataParallel (#8250)
- MessagePassing.jittable (#8781, #8731)
- torch_geometric.data.makedirs in favor of os.makedirs (#8421)

Package-wide Improvements

- mypy (#8254)
- fsspec as file system backend (#8379, #8426, #8434, #8474)
- torch-scatter is not installed (#8852)

Temporal Graph Support

- NeighborLoader and LinkNeighborLoader (#8372, #8428)
- Data.{sort_by_time,is_sorted_by_time,snapshot,up_to} for temporal graph use-cases (#8454)
- torch_geometric.distributed (#8718, #8815)

torch_geometric.datasets

- RCDD from "Datasets and Interfaces for Benchmarking Heterogeneous Graph Neural Networks" (#8196)
- StochasticBlockModelDataset(num_graphs: int) argument (#8648)
- FakeDataset and FakeHeteroDataset (#8404)
- InMemoryDataset.to(device) (#8402)
- force_reload: bool = False argument to Dataset and InMemoryDataset in order to enforce re-processing of datasets (#8352, #8357, #8436)
- TreeGraph and GridMotif generators (#8736)

torch_geometric.nn

- KNNIndex exclusion logic (#8573)
- KGEModel.test() (#8298)
- nn.to_hetero_with_bases on static graphs (#8247)
- ModuleDict, ParameterDict, MultiAggregation and HeteroConv for better support for torch.compile (#8363, #8345, #8344)

torch_geometric.metrics

- f1@k, map@k, precision@k, recall@k and ndcg@k metrics for link-prediction retrieval tasks (#8499, #8326, #8566, #8647)

torch_geometric.explain

- conv.explain = False (#8216)
- visualize_graph(node_labels: list[str] | None) argument (#8816)

torch_geometric.transforms

- AddRandomWalkPE (#8431)

Other Improvements

- utils.to_networkx (#8575)
- utils.noise_scheduler.{get_smld_sigma_schedule,get_diffusion_beta_schedule} for diffusion-based graph generative models (#8347)
- utils.dropout_node via relabel_nodes: bool argument (#8524)
- utils.cross_entropy.sparse_cross_entropy (#8340)
- profile.profileit("xpu") (#8532)
- ClusterData (#8438)
- HeteroData.to_homogeneous() (#8858)
- InMemoryDataset to reconstruct the correct data class when a pre_transform has modified it (#8692)
- OnDiskDataset (#8663)
- DMoNPooling loss function (#8285)
- NaN handling in SQLDatabase (#8479)
- CaptumExplainer in case no index is passed (#8440)
- edge_index construction in the UPFD dataset (#8413)
- AttentionalAggregation and DeepSetsAggregation (#8406)
- GraphMaskExplainer for GNNs with more than two layers (#8401)
- input_id computation in NeighborLoader in case a mask is given (#8312)
- Linear layers (#8311)
- Data.subgraph()/HeteroData.subgraph() in case edge_index is not defined (#8277)
- MetaPath2Vec (#8248)
- AttentionExplainer usage within AttentiveFP (#8244)
- load_from_state_dict in lazy Linear modules (#8242)
- DimeNet++ performance on QM9 (#8239)
- GNNExplainer usage within AttentiveFP (#8216)
- to_networkx(to_undirected=True) in case the input graph is not undirected (#8204)
- TwoHop and AddRandomWalkPE transformations (#8197, #8225)
- HeteroData objects converted via ToSparseTensor() when torch-sparse is not installed (#8356)
- add_self_loops=True in GCNConv(normalize=False) (#8210)
- use_segment_matmul based on benchmarking results (from a heuristic-based version) (#8615)
- NELL and AttributedGraphDataset are now represented as torch.sparse_csr_tensor instead of torch_sparse.SparseTensor (#8679)
- torch.sparse tensors (#8670)
- ExplainerDataset will now contain node labels for any motif generator (#8519)
- utils.softmax faster via the in-house pyg_lib.ops.softmax_csr kernel (#8399)
- utils.mask.mask_select faster (#8369)
- Dataset.num_classes on regression datasets (#8550)

Full Changelog: https://github.com/pyg-team/pytorch_geometric/compare/2.4.0...2.5.0